Live Experiment:

How to sort through conflicting advice, increase open rates and reduce unsubscribes during your product launch

Let’s say you are about to launch a product and you have 3 paralysing problems:

Problem #1: You get conflicting advice from three subject-matter experts you know, like and trust.

Problem #2: Your average open rate plummeted from 68% to 30% because you focussed on other aspects of your business and went MIA from your subscribers' inboxes for a few months.

Let’s put those percentages in perspective:

If you have 1000 subscribers but only 30% regularly open your emails, you don’t have 1000 subscribers. You have 300.

It doesn’t matter how big your email list is: if your average open rate drops from 68% to 30% on the eve of a launch, that’s catastrophic.

That’s like waking up one day to see more than half of your readers... gone.
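Here is that arithmetic as a tiny Python sketch, using the 1,000-subscriber example above. It treats the average open rate as the share of the list that actually reads your emails, which is a simplification; the function name is just illustrative.

```python
def engaged_readers(list_size: int, open_rate: float) -> int:
    """Approximate number of subscribers who actually open your emails."""
    return round(list_size * open_rate)


list_size = 1000
before = engaged_readers(list_size, 0.68)  # readers at a 68% open rate
after = engaged_readers(list_size, 0.30)   # readers at a 30% open rate

# Share of your *engaged* readers that disappeared.
lost_share = (before - after) / before

print(before, after, f"{lost_share:.0%}")  # 680 300 56%
```

In other words, 380 of your 680 readers (about 56%) have gone quiet, even though the list itself still shows 1,000 names.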

If people don’t open your emails, you can’t sell. Period.

Problem #3: Typically, a lot of readers unsubscribe during a launch because they get overwhelmed with (read: sick of) all the marketing emails hitting their inboxes.

What most experts don’t openly advertise is that your list will actually shrink during a product launch (unless you do enough outward-facing promotion, through Facebook ads, affiliates etc., to bring in more people than are leaking out).

That’s like spending months extracting water from desert plants, only for somebody to poke a hole in the bottom of your flask when you’re finally ready to drink.
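The leak is simple subtraction: the list only grows during a launch if new sign-ups outpace unsubscribes. A minimal sketch; the figures below are hypothetical, not data from this experiment.

```python
def net_list_change(new_subscribers: int, unsubscribes: int) -> int:
    """Net change in list size over a launch: inflow minus leakage."""
    return new_subscribers - unsubscribes


# Hypothetical launch without much outward-facing promotion: the list shrinks.
print(net_list_change(new_subscribers=40, unsubscribes=65))   # -25

# Hypothetical launch where ads/affiliates bring in more than leaks out.
print(net_list_change(new_subscribers=120, unsubscribes=65))  # 55
```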

Since those are the top 3 pain points in my business right now, I'm going to do something about it. And I'm going to do something fun.

I'm going to run a series of experiments over the next three months and report the results live on the blog.

True to the blog’s new name “Trial and Eureka”. 🙂

I’m maniacally focussed on finding a launch system that sells, doesn’t take every waking hour to set up and reflects my core values.

So, here’s the plan:

I’m going to launch the same course (“Fast50”) 3 different times using 3 different launch systems.

Then I will take you behind the scenes and share the results with you.

(You can read more about that here.)

But an experiment like this brings up three obvious challenges:

Issue #1: How do you isolate the launches from each other so you can get reliable data?

Later launches shouldn't benefit from the hype (or suffer from the burnout) created by earlier ones.

Issue #2: How do you do three launches back to back without burning out your list?

Issue #3: How do you solve the open-rate and unsubscribe problems?

Testing 3 different launch systems to see which actually performs better solves the problem of conflicting advice.

The trouble is... it actually exacerbates the other two problems. Fewer and fewer people will open the emails, while more and more will unsubscribe as they get fed up with launch emails.

It is a zero-sum tug of war.

Resolving conflicting advice <-------- versus --------> Fixing open rates and unsubscribes

What to do?

Well...

If you can’t win the game, change the rules.

So, here is how I’m designing the experiment and my thought process behind those design decisions.

Let’s take each issue in turn.

Issue #1: How to isolate the launches from each other so we get reliable data

The idea is simple: I will divide my list into 3 random groups. Each group will only see one of these launch sequences.

The execution, however, gets a bit more complicated...

It calls for some meticulous marketing automation, which we’ll look at in a later article.

First though, let’s map out WHAT we want to accomplish (the big picture) before we get bogged down in the HOW (the marketing automation).

What do you do with people who asked to observe all three launch sequences?

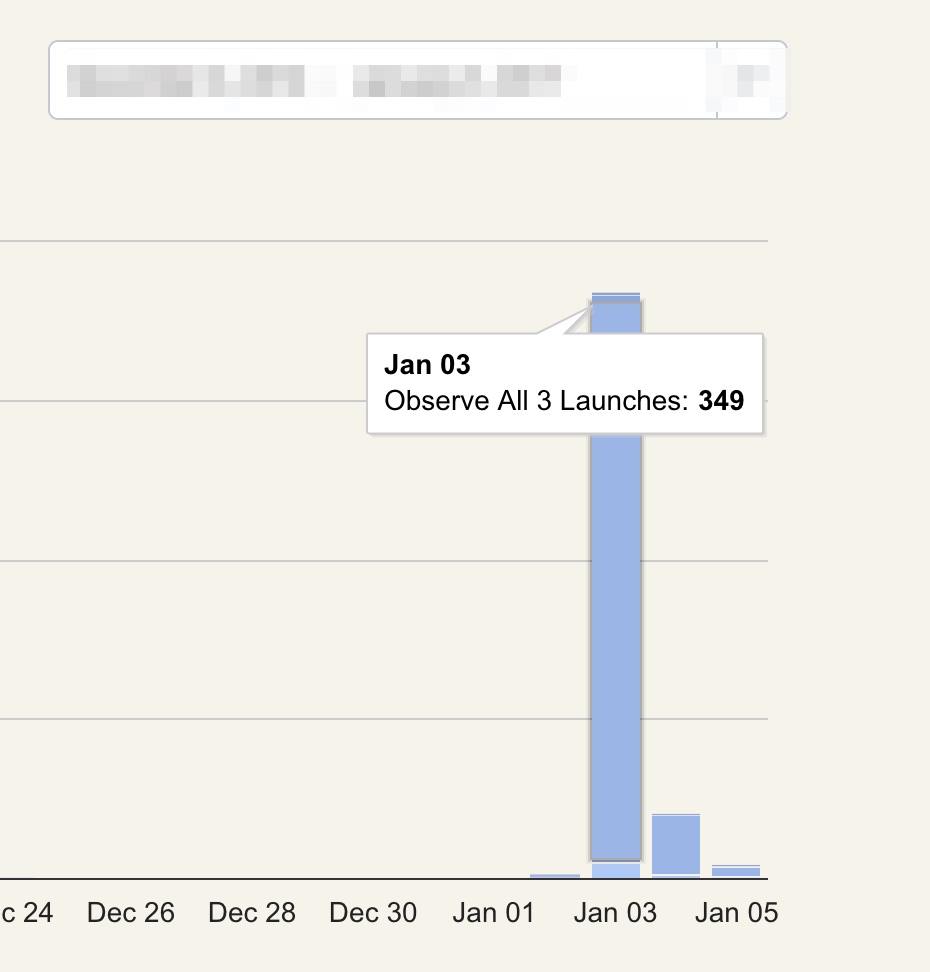

[392] people chose to observe all three launches (hereafter “Observers”). For the purposes of this experiment, we won’t take their results into account. Their purchases won’t count towards the tally.

To do this, I’m segmenting them off. They won’t receive the original broadcasts from the three launches. They’ll get a copy of each broadcast in their own little corner.

So every launch email goes out twice:

- The “original” broadcast goes to the random pool earmarked for that launch.

- A “copy” of the same broadcast goes to the Observers.

Think of it like serving the same food in two different cafeterias on campus.

This allows me to track the metrics of each group (Launch 1 vs Launch 2 vs Launch 3 vs Observers).
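A minimal Python sketch of the "every launch email goes out twice" rule, plus per-group open-rate tracking. It assumes each subscriber carries exactly one group label; the labels, function names and addresses are hypothetical, and in practice your email platform's tags and segments would do this work.

```python
from collections import defaultdict

OBSERVER = "Observer"  # hypothetical label for the Observer segment


def recipients(launch: str, groups: dict[str, str]) -> dict[str, list[str]]:
    """Split one launch email into the 'original' send and the Observer 'copy'.

    `groups` maps each email address to the single group it is earmarked for:
    a launch name such as "SOL", "Slingshot" or "ZTL", or OBSERVER.
    """
    sends: dict[str, list[str]] = {"original": [], "copy": []}
    for email, group in groups.items():
        if group == launch:
            sends["original"].append(email)
        elif group == OBSERVER:
            sends["copy"].append(email)
    return sends


def open_rate_by_group(events: list[tuple[str, bool]]) -> dict[str, float]:
    """Open rate per group from (group, opened) records, one per email sent."""
    sent: dict[str, int] = defaultdict(int)
    opened: dict[str, int] = defaultdict(int)
    for group, did_open in events:
        sent[group] += 1
        opened[group] += int(did_open)
    return {group: opened[group] / sent[group] for group in sent}


groups = {"ana@example.com": "SOL", "ben@example.com": "ZTL", "cem@example.com": OBSERVER}
print(recipients("SOL", groups))
# {'original': ['ana@example.com'], 'copy': ['cem@example.com']}
```

Keeping the Observer copy as a separate send is what lets the Observers' opens and purchases be excluded from the launch tallies.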

How are the random groups chosen?

I take my existing subscribers and subtract anyone who opted in to become an Observer (and, as in Route #1 below, anyone who asked for marketing immunity). That gives us the experiment pool:

Existing List - (Marketing Immunity) - (Observers) = Experiment Pool

Then on January 9th, I’ll just divide that pool into three random groups of equal size. Each group gets earmarked in my system for one of the three launches:

- Experiment Pool / 3 = Launch Group #1

- Experiment Pool / 3 = Launch Group #2

- Experiment Pool / 3 = Launch Group #3
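A minimal sketch of the random split in Python, assuming subscribers are identified by email address. Since a pool is rarely divisible by 3, "equal size" here means the group sizes differ by at most one. The function name and the optional `seed` (to make a split reproducible) are illustrative assumptions, not part of any email platform.

```python
import random


def assign_launch_groups(existing_list, observers, immune=(), seed=None):
    """Randomly split the experiment pool into three (near-)equal launch groups.

    Experiment Pool = Existing List - (Observers) - (Marketing Immunity).
    """
    excluded = set(observers) | set(immune)
    # Sort first so that a fixed seed always gives the same split.
    pool = sorted(set(existing_list) - excluded)
    random.Random(seed).shuffle(pool)

    groups = {"SOL": [], "Slingshot": [], "ZTL": []}
    names = list(groups)
    # Deal the shuffled pool round-robin, so group sizes differ by at most one.
    for i, email in enumerate(pool):
        groups[names[i % len(names)]].append(email)
    return groups


subscribers = [f"user{i}@example.com" for i in range(10)]
groups = assign_launch_groups(subscribers, observers=["user0@example.com"], seed=9)
print({name: len(members) for name, members in groups.items()})
# {'SOL': 3, 'Slingshot': 3, 'ZTL': 3}
```

Shuffling and then dealing round-robin keeps every assignment random while guaranteeing balanced group sizes, which a per-person coin flip would not.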

Who exactly sees a particular launch then?

Launch #1: Bushra Azhar’s Sold Out Launch System (“SOL”)

The only people who will receive the SOL launch sequence are:

- Existing subscribers earmarked to Launch 1 on January 9th.

- Any new subscribers the SOL launch brings in (through Facebook ads, affiliates etc). These are automatically earmarked into Launch 1 and won’t receive Launches 2 & 3.

The Observers will receive copies of all the SOL emails via a separate email sequence, which will be tracked separately.

Launch #2: Bryan Harris’s Slingshot System

The only people who will receive the Slingshot launch sequence are:

- Existing subscribers earmarked to Launch 2 on January 9th.

- Any new subscribers the Slingshot system brings in during the launch period.

Observers get their own copy of the Slingshot sequence.

Launch #3: Ramit Sethi’s Zero to Launch System (“ZTL”)

The only people who will receive the ZTL launch sequence are:

- Existing subscribers earmarked to Launch 3 on January 9th.

- Any new subscribers the ZTL system brings in during the launch period.

Observers get their own copy of the ZTL sequence.

What about people who weren’t on my list and opted in after they heard about this experiment from a friend?

I have a small(ish) but loyal list of subscribers, who go out of their way to help me. (Plus dedicated trolls who send me hate mail once a week like clockwork.)

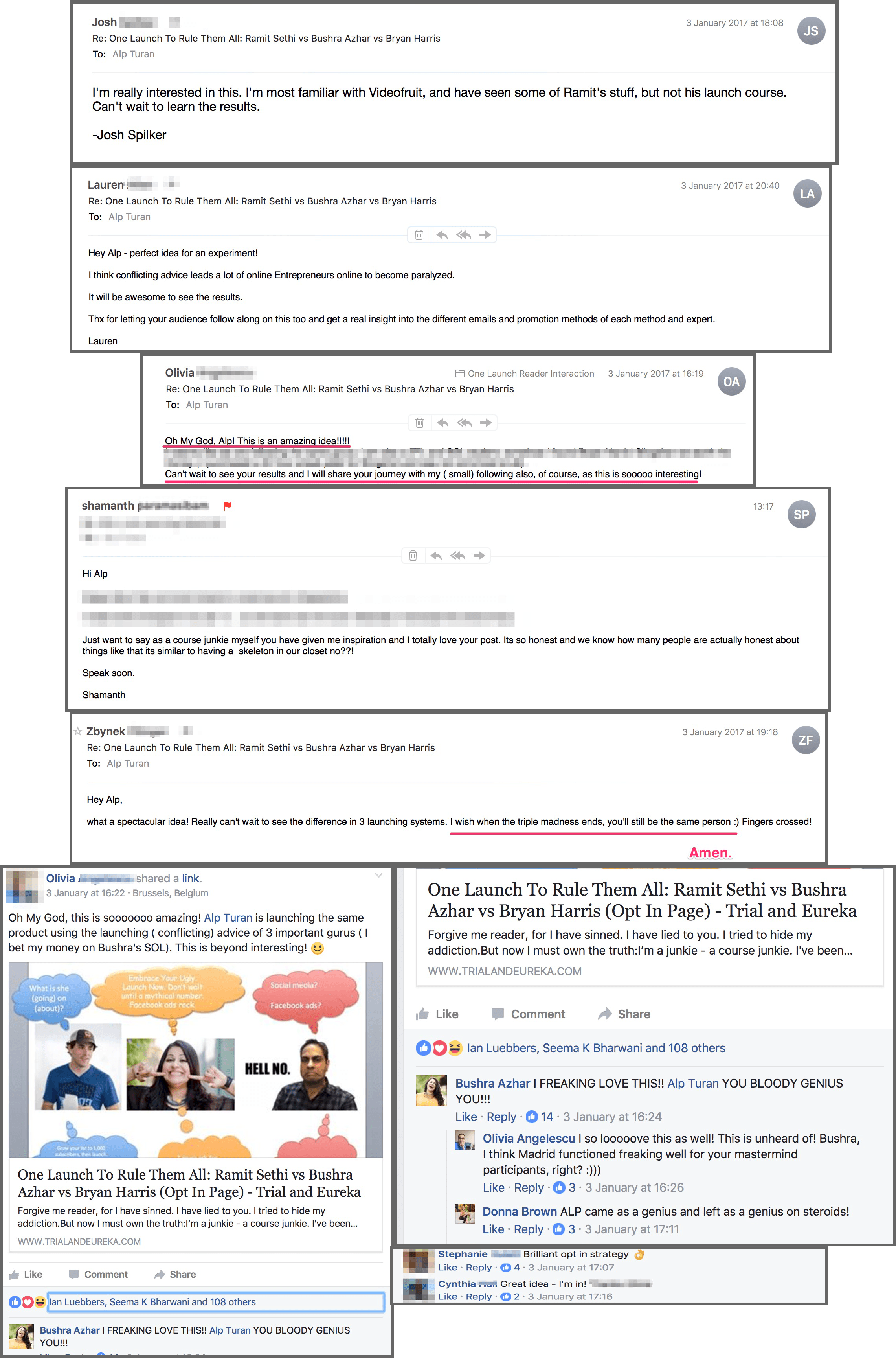

Here is what happened:

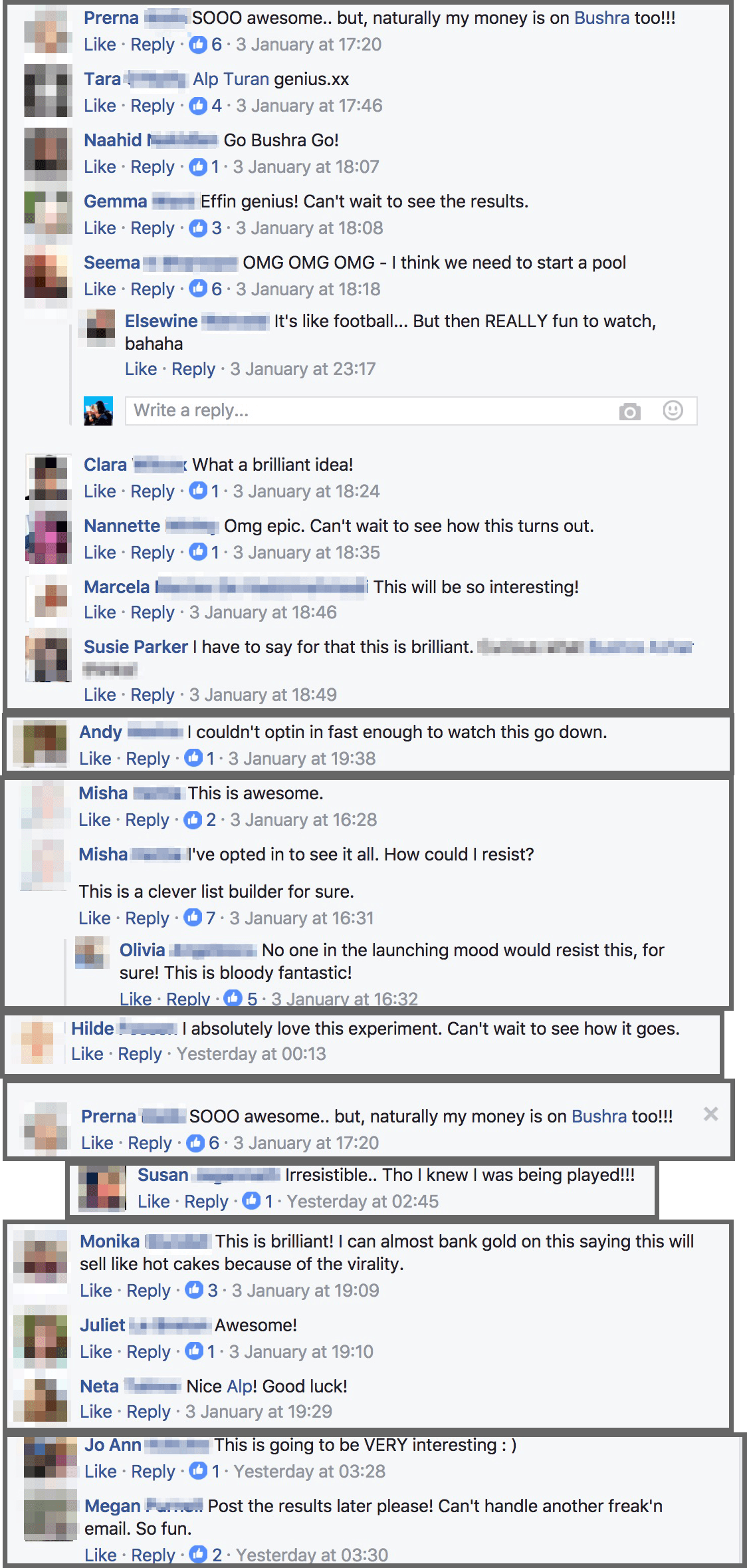

I emailed my list about this experiment at 3 pm Istanbul time (7 am EST) yesterday. Then I hopped on a plane to Izmir to surprise my mom for her birthday. My grandparents came over, we celebrated, then I went to sleep.

My existing subscribers must have shared the link with their friends, because this is what I woke up to the next morning:

- 65 FB notifications;

- 14 love notes in my inbox;

- 6 FB messages; and

- 270 new subscribers who joined my list to watch the experiment.

So within 22 hours of the broadcast going out, my subscribers brought in 270 new subscribers by word of mouth. And the number is still growing.

So what happens with those new subscribers?

Short answer:

If you weren’t on my list on 02 January 2017, the only way you could have joined the launch was through this page. Anybody who opts in there is automatically tagged and earmarked for a launch based on their choice.

Long answer:

Ok, so this gets a bit boring and technical. Feel free to jump ahead to the next section.

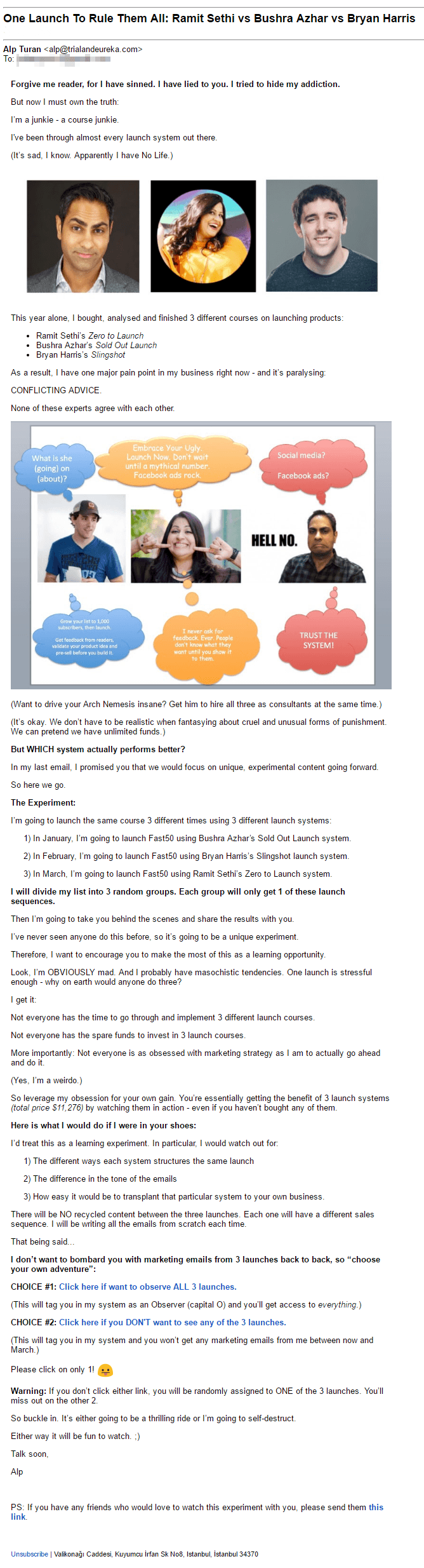

If you were on my list on 02 January 2017, you got this email from me yesterday:

That email gave you three options:

- Click to observe all 3 launches.

- Click to get “marketing immunity” so you don’t receive any launch emails until April.

- Do nothing and I’ll randomly assign you to ONE of the three launches.

Clicking the first link took you to this page:

Clicking the second link took you to this page:

At the bottom of the email, there was a line that asked you to spread the word:

That line links to this opt-in page.

Let’s recap:

There are 3 doors into this experiment:

- Route #1: You received an email about it from me.

- Route #2: You heard about it from a friend.

- Route #3: You read about it on the blog.

Regardless of which “door” you enter through, you get the same three choices: observe all 3 launches, observe just one launch, or don’t observe anything.

I just have to adjust my marketing automation to cover all 3 doors, so nobody falls through the cracks.

Here is what the marketing automation looks like for each route:

Route #1: Email to list (for existing subscribers)

GIST: Anyone who is currently on my email list will be segmented through this route.

So the automation flow looks like this:

- Email to list → “Click here if you DON'T want to see any of the 3 launches” → Tag “Marketing Immunity” → Clicking sends to “Immunity Page”.

- Email to list → “Click here if you want to observe ALL 3 launches” → Tag “Observe all 3 launches” → Clicking sends to “All 3 launches Page”.

- Random assignment → List - (Marketing Immunity) - (Observers) = Random Pool. On January 9th, divide Random Pool into 3. Tag “Fast50 SOL Launch”, “Fast50 Slingshot Launch” and “Fast50 ZTL Launch” respectively.
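The Route #1 flow boils down to "click → tag → landing page", plus a filter for the random pool. A minimal Python sketch, assuming each subscriber's tags are a plain set; the link IDs (`no_launches`, `all_three`) and function names are hypothetical stand-ins for whatever your email platform uses.

```python
# Hypothetical link IDs mapped to (tag applied, page the click lands on).
ROUTE1_LINKS = {
    "no_launches": ("Marketing Immunity", "Immunity Page"),
    "all_three": ("Observe all 3 launches", "All 3 launches Page"),
}
EXCLUDED_FROM_RANDOM = {"Marketing Immunity", "Observe all 3 launches"}


def handle_route1_click(tags: set[str], link: str) -> str:
    """Tag the subscriber for the link they clicked and return the landing page."""
    tag, page = ROUTE1_LINKS[link]
    tags.add(tag)
    return page


def random_pool(subscribers: dict[str, set[str]]) -> list[str]:
    """List - (Marketing Immunity) - (Observers) = Random Pool."""
    return [email for email, tags in subscribers.items() if not tags & EXCLUDED_FROM_RANDOM]


subscribers = {"ana@example.com": set(), "ben@example.com": set(), "cem@example.com": set()}
print(handle_route1_click(subscribers["ana@example.com"], "no_launches"))  # Immunity Page
print(handle_route1_click(subscribers["ben@example.com"], "all_three"))    # All 3 launches Page
print(random_pool(subscribers))  # ['cem@example.com']
```

Doing nothing is a valid choice here: a subscriber with no choice tag simply stays in the random pool.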

Route #2: Opt-in Page (for word-of-mouth referrals)

GIST: Only new subscribers will be coming in through this route. For example, subscriber Sack shares the “One Launch Opt-in Page” link with his friend Martha, who then joins my list.

- Opt-in Page → If they don't want emails about the launches, they just do nothing.

- Opt-in Page → “Opt in to observe all 3 launches” → Tag “Observe all 3 launches” → Clicking sends to “All 3 launches Page”.

- Opt-in Page → “Opt in to be randomly assigned to 1 launch” → Form & Tag “Random” → Clicking sends to “Random Page”.

Route #3: The Blog (for a safety net)

GIST: This creates a “safety net” to catch random visitors who happen to browse my blog during a launch but weren’t otherwise “caught” by my launch marketing.

For example, Jack browses my blog and sees this blog post:

He clicks on it and is taken to the opt-in page. He thinks it’s a cool experiment and opts in.

The point is: Jack wasn’t referred by any of my existing subscribers (Route 2) and didn’t come through the launch marketing (FB ads etc).

Automation flow:

- Clicks article on the blog page → Gets redirected to Opt-in Page → Thereafter, the flow is as per Route 2.
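Routes #2 and #3 share one opt-in page, so a single handler covers both. This is a minimal Python sketch, assuming tags are a plain set. The form IDs and function names are hypothetical, and so is the form Jack picks below, since the post doesn't say which one he chose.

```python
from typing import Optional

# Hypothetical form IDs on the shared opt-in page -> (tag applied, thank-you page).
OPTIN_FORMS = {
    "observe_all": ("Observe all 3 launches", "All 3 launches Page"),
    "random_one": ("Random", "Random Page"),
}


def blog_click() -> str:
    """Route 3: clicking the article on the blog just redirects to the opt-in page."""
    return "Opt-in Page"


def handle_optin(tags: set[str], form: Optional[str]) -> Optional[str]:
    """Routes 2 and 3: apply the chosen form's tag and return the next page.

    form=None means the visitor did nothing: no tag, so no launch emails.
    """
    if form is None:
        return None
    tag, page = OPTIN_FORMS[form]
    tags.add(tag)
    return page


martha: set[str] = set()  # Route 2: referred by a friend
jack: set[str] = set()    # Route 3: arrived via the blog
print(blog_click())                                # Opt-in Page
print(handle_optin(martha, "random_one"), martha)  # Random Page {'Random'}
print(handle_optin(jack, "observe_all"), jack)     # All 3 launches Page {'Observe all 3 launches'} (hypothetical choice)
```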

What about people who mischievously clicked more than one link / opted into both forms?

4 people couldn’t make up their minds.

They asked to eat their cake and have it too. They opted into BOTH forms, which means they wanted to be randomly allocated to just one launch AND to see all three launches.

We have a saying for that in Turkish:

Yerçekimsiz ortamda muz yiyip çilek tadı almak istiyorum.

Which roughly translates to: I want to eat a banana in Zero G but taste strawberries.

We can set up automation to deal with this:

Anyone who has more than one tag (because they clicked more than one link or opted into more than one form)** gets automatically added to a “Please make up your mind” sequence.

**(Four possible combinations: “Immunity + Observer”; “Immunity + Random”; “Observer + Random”; “Immunity + Observer + Random”)

Then my autoresponder will start bugging them on a daily basis until they commit to one option.
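The "more than one tag" rule is a set intersection, and enumerating every conflicting pair or triple confirms there are exactly four cases. A minimal Python sketch; the tag names are shortened as in the footnote, and the daily-reminder autoresponder itself is not modelled.

```python
from itertools import combinations

# The three mutually exclusive choices (tag names shortened as in the footnote).
CHOICE_TAGS = ("Immunity", "Observer", "Random")


def needs_to_make_up_mind(tags: set[str]) -> bool:
    """True if a subscriber holds more than one of the exclusive choice tags."""
    return len(tags & set(CHOICE_TAGS)) > 1


# Every conflicting combination: any 2 of the 3 tags, or all 3.
conflicts = [combo for size in (2, 3) for combo in combinations(CHOICE_TAGS, size)]
print(len(conflicts))                                 # 4
print(needs_to_make_up_mind({"Observer", "Random"}))  # True
print(needs_to_make_up_mind({"Observer"}))            # False
```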

So, that is how I plan to isolate the three launches from each other in order to get reliable data.

Let’s move on to our next issue.

Issue #2: How to do three launches back to back without burning out your list?

What you DON’T want to do is to shove it down people’s throats without asking them.

That’s like surreptitiously injecting all your friends with an experimental drug and hoping nobody notices.

So what I did instead was to be transparent about my intentions and ask people how they wanted to be treated:

- Do you actually want to receive marketing emails from me?

- If not, I’ll give you “marketing immunity”, which means I won’t sell you anything until April.

- If yes, do you want to observe all three launches or just one?

Here is what happened:

UPDATE: As of 04 January 2017 (25 hours after the email)

- 103 subscribers chose to observe all 3 launches. They essentially asked to be marketed at three times in a row.

- 0 subscribers asked for marketing immunity. 21 readers unsubscribed.

- The rest of my list will be divided into 3 random groups on Jan 9th.

My subscribers then told their friends about the experiment, which brought in 270 new subscribers.

Of those 270 new subscribers:

- 264 asked to observe all three launches.

- 6 asked to be randomly allocated to just one launch.
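A quick tally of the referral numbers above, to put the 264 in proportion (figures taken from the update; the dictionary keys are illustrative):

```python
# New subscribers referred by existing readers, as reported in the update above.
referred = {"observe_all_3": 264, "random_one": 6}

total_referred = sum(referred.values())
share_observe_all = referred["observe_all_3"] / total_referred

print(total_referred, f"{share_observe_all:.1%}")  # 270 97.8%
```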

I find these statistics bizarrely fascinating. These are 264 complete strangers who had never heard of me before, asking to be marketed at: not once, but three times in a row.

Wait… but why?

Doesn’t every marketing expert tell you that people hate being sold to?

Frankly, that’s just bullshit.

People hate being sold to, but they love to buy.

You just have to catch the right people (serious buyers not freebie hoarders) at the right time (when they are in a buying mood) and sell them the right product.

Therefore, I advocate buyer-building over list-building.

But why exactly should you focus on building a list of buyers (rather than just subscribers)?

In Call to Action: Module 1, Ramit Sethi states that a good starting benchmark is a 0.5% list conversion rate.

In other words, if you have 1000 subscribers and make 5 sales (0.5% list conversion), that means you need 200 subscribers for each sale. So every buyer you get on your list is worth 200 “subscribers”.

Let’s look at the experiment data from this perspective:

Designing an optin that gets you 270 new prospects who explicitly ask you to sell to them has the same effect on your bottom line as getting 54,000 new subscribers (270 x 200).
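The buyer-versus-subscriber arithmetic as a small Python sketch. It uses the 0.5% list-conversion benchmark cited above. The equivalence assumes each of the 270 prospects converts like a buyer, which is the premise of the argument rather than a measured result; the function names are illustrative.

```python
def subscribers_per_sale(list_conversion_rate: float) -> int:
    """How many subscribers it takes, on average, to produce one sale."""
    return round(1 / list_conversion_rate)


def subscriber_equivalent(buyers: int, list_conversion_rate: float) -> int:
    """How many ordinary subscribers would produce the same number of sales."""
    return buyers * subscribers_per_sale(list_conversion_rate)


benchmark = 0.005  # 0.5% list conversion, the starting benchmark cited above
print(subscribers_per_sale(benchmark))        # 200
print(subscriber_equivalent(270, benchmark))  # 54000
```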

Personally, I would rather spend 48 hours designing an optin offer that gets me 270 prospects now than spend 2 years list-building to get 54,000 subscribers.

Question is: How exactly do you catch serious buyers when they are in a buying mood?

To do that, you need to strategically design an optin offer that attracts your ideal buyer like the scent of freshly baked bread while repelling freebie hoarders like an IRS audit.

(A freebie hoarder is someone who will never buy from you. They just download stuff left and right because it’s free, without any serious intention of actually implementing it.)

It’s a bit difficult to explain this in the abstract, so let’s use my own business as an example:

I have a mini-course called Fast50 on building optin offers that convert at 50%+.

My ideal buyer for that course is:

A small (online) business owner who is on the cusp of launching her first or second offer. She is not a complete beginner; she’s been in business for 6-18 months. She has taken other business courses before, she knows what an optin offer is, but she just isn’t getting the traction she was hoping to get. So she has to stalk other people’s FB groups for clients, and growing her email list feels like SUCH a slog. Oh, and she also hates tech.

(Note: I’m not using “she” to introduce gender bias. I’m a dude, so I always tend to write “he”, which my gf hates. End of Equal Opportunity to Be Sold Stuff rant.)

Now, I didn’t wake up one day with that description fully formed, like Athena springing from Zeus’s head. When I beta-tested / validated / pre-sold Fast50 last month, I realised that this group bought more than any other group. Not only were they easier to sell to, but they were also more likely to get results from the course… which means I’ll get better testimonials and case studies… which in turn means Fast50 will be that much easier to sell in future launches.

Knowing this, I can adjust my marketing.

So, the challenge was to design an optin that would be irresistible to someone like my ideal buyer while being significantly less attractive to someone who isn’t serious about launching a product/service online.

My thought process went like this:

This experiment would be irresistible to anyone in a launching mood. They would love to see which launch system actually performs better. And anyone who is about to launch will need an optin offer. I have a course on how to build an optin offer that converts at 50%+ in 48 hours (“Fast50”). Bingo.

Therefore, most of the people who come in to watch me test the 3 launch systems on Fast50 would also be interested in Fast50 itself.

(Your opt in offer should naturally lead to your paid offer, so it can prime people for the sale.)

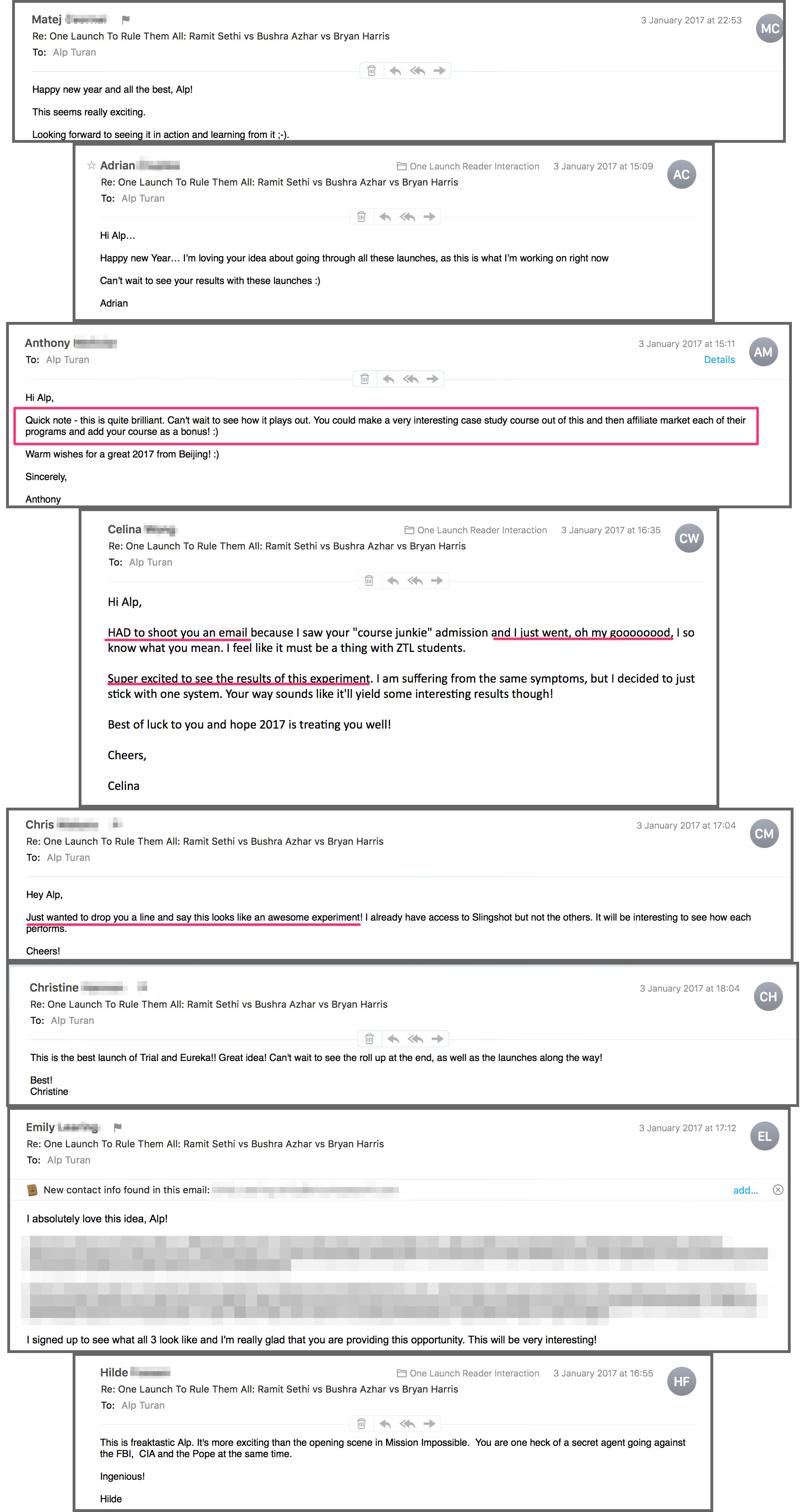

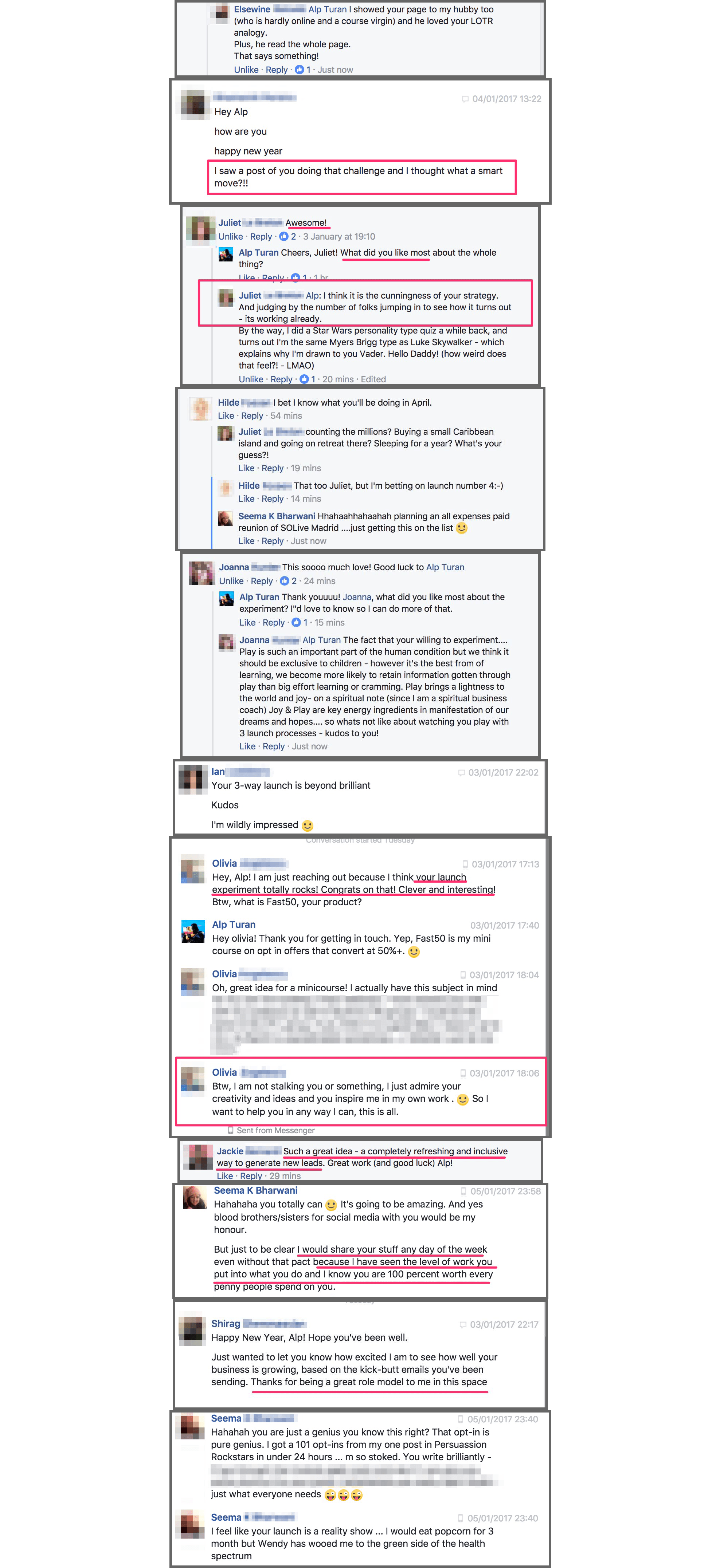

Based on the responses I’m getting, my hypothesis seems to be holding up.

Once you prove there is market demand for your product, the next step is to ask yourself: How can I prove that this will get them results? What kind of proof would people find most compelling?

The best way to prove something works is to show it in action.

If you want to sell bulletproof glass, don’t tell people it can withstand this much force. Give them a hammer and ask them to take a swing at it. Show it in action.

As they watch me launch Fast50 three times using different launch systems, prospects will be opting in through Fast50-style optins, so they get to watch Fast50 itself in action. Meta, right?

There is also an added benefit to doing this. It proves that Fast50 is compatible with each of these three experts’ launch systems. This allows me to counter the sales objection around conflicting advice before buyers can raise it: “Yeah, but I’m following Ramit Sethi. Will your stuff on optin offers actually work for a ZTL-style launch?”

Issue #3: How to increase open rates and reduce unsubscribes during your launch?

The logic is really simple: I respect people’s wishes. I let my subscribers “choose their own adventure”.

My hypothesis is:

- Anyone who asks to observe my launch will actually want to read my launch emails. Therefore, they should be more likely to open the emails and less likely to unsubscribe during the launch.

- Anyone who isn’t interested in this launch can opt out of my marketing, instead of unsubscribing from my list when they receive a sales email they don't want. The thing is: They wouldn’t have bought anyway. At least this way they stay on my list, so I can sell them a different product later on.
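Testing this hypothesis means comparing open and unsubscribe rates between Observers and the randomly assigned launch groups. The post doesn't name a statistical test. A pooled two-proportion z-test is one standard option, swapped in here rather than taken from the post, for asking whether a gap between two rates is bigger than chance. One caveat: Observers chose themselves, so a difference there shows association, not that opting in caused it. The three randomly assigned launch groups compare more cleanly. The numbers below are hypothetical.

```python
import math


def two_proportion_z_test(hits_a: int, n_a: int, hits_b: int, n_b: int) -> tuple[float, float]:
    """Pooled two-proportion z-test. Returns (z, two-sided p-value).

    hits = opens (or unsubscribes), n = emails delivered, for groups A and B.
    """
    p_a, p_b = hits_a / n_a, hits_b / n_b
    pooled = (hits_a + hits_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("both groups have a 0% or 100% rate; the test is undefined")
    z = (p_a - p_b) / se
    # Two-sided p-value: 2 * (1 - Phi(|z|)) == erfc(|z| / sqrt(2)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical numbers: Observers vs. one randomly assigned launch group.
z, p = two_proportion_z_test(hits_a=150, n_a=300, hits_b=100, n_b=300)
print(f"z = {z:.2f}, p = {p:.5f}")
```

A small p-value suggests the gap in rates is unlikely to be noise. With small groups, though, even real differences can fail to reach significance.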

Key Takeaways

Here is a recap of what I learned from this experiment so far:

- Focus on buyer-building NOT list-building. If you build a list of buyers from the get-go, you’ll have an easier time selling.

- You build a list of buyers from the get-go by strategically crafting optin offers that attract your ideal buyers and prime them for the sale.

- If your list responds poorly to authentic attempts at marketing your products and services, you might have built a list of non-buyers. If that’s the case, don’t be too hard on yourself. Reframe your self-speak from “I’m a failed business owner” to “Wow, I’m glad I found out about this today rather than next year. Now I can shift gears."

- You can avoid burning out your list by giving them a choice to opt out of your marketing if it’s not in their best interest to buy your product at this time. Confidence in your offer translates to confidence in your marketing.

- Analyse the people who opt out for marketing insights:

  - If they’re not your ideal buyers, you need to adjust your marketing so you no longer attract people like them. Think about what makes them non-buyers.

  - If they are ideal buyers but they aren’t yet at a stage where they can benefit from your offer, that means your marketing isn’t catching them at the right stage of their journey.

  - If they are ideal buyers and they need what you’re selling BUT they don’t realise they need it, one of two things might be happening:

    ◦ 1) You might be addressing a NEED, not a WANT, which is why it doesn’t sell (i.e. you built the wrong product or positioned it incorrectly). OR

    ◦ 2) You need to educate them and make the connection between your product and their problem explicit.

- Finally, if you built a list of buyers and you have a product they actually want, you might be surprised by how receptive they are to your marketing when you give them the choice to opt out.

Next Steps

Next week, I’ll write a “Q&A”-style post where I answer all your questions regarding this experiment. (Here is a sneak peek.)

If you think this is a cool experiment and would love to take a more active part in it, here are two things you could do:

Thing #1: Send me any questions you have about the experiment. I’ll answer them in a blog post next week. You can post your question as a comment below.

Thing #2: If you have any friends who would love to watch this experiment with you, please send them this link.

Opt in here if you want to observe ALL 3 LAUNCHES:

OR...

Opt in here to be randomly assigned to ONE of the 3 launches. You'll miss out on the other two.